Recent testing can finally help us answer the question: How accurate is ChatGPT? Studies report ChatGPT achieving 85% accuracy on medical diagnostic tasks, while specialized fields like orthodontics show more nuanced performance patterns.

How Accurate is ChatGPT in 2026?

Current error rates hover around 12-18% for general knowledge questions, while specialized fields show higher error rates. Medical diagnostic queries show roughly 25% inaccuracy, while orthodontic applications show more promising accuracy levels.

The most common failure modes include outdated and made-up information. However, AI hallucinations have decreased compared to earlier versions. GPT-5.2’s improved training reduces fabricated responses by roughly 40%.

Interestingly, prompt engineering affects error rates dramatically; aggressive prompting can boost accuracy.

ChatGPT Daily-Use Accuracy

GPT benchmarks from 2026 show that practical performance varies significantly with user interaction patterns and application context.

Research indicates that real-world accuracy rates often fall below benchmark scores due to ambiguous queries, unclear context, and user expectations that exceed the model’s intended capabilities.

What’s particularly striking is how directly user behavior affects response quality. Studies show that detailed, specific prompts can improve accuracy by up to 23% compared to vague requests.
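A figure like "up to 23%" can be read either as a relative gain or as a gain in percentage points, and the two readings lead to different results. The sketch below works through both using a hypothetical 70% baseline accuracy; the baseline and the function names are illustrative assumptions, not values from the studies.

```python
def relative_gain(baseline: float, gain: float) -> float:
    """Accuracy after a relative improvement (0.23 means 23% better than baseline)."""
    return min(1.0, baseline * (1.0 + gain))


def absolute_gain(baseline: float, gain: float) -> float:
    """Accuracy after adding percentage points (0.23 means +23 points)."""
    return min(1.0, baseline + gain)


baseline = 0.70  # hypothetical accuracy with vague prompts, for illustration only

print(f"relative reading: {relative_gain(baseline, 0.23):.3f}")  # 0.861
print(f"absolute reading: {absolute_gain(baseline, 0.23):.3f}")  # 0.930
```

When comparing accuracy claims, it helps to check which of these two readings the underlying study uses.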

AI Hallucinations with GPT-5.2

The latest model implements enhanced verification protocols that cross-reference information against multiple knowledge sources before generating responses. This validation reduces fabricated content by approximately 40% compared to earlier versions.

However, GPT-5 benchmarks reveal that hallucinations haven’t been eliminated. The model still struggles with highly specific technical details and recent events outside its training cutoff.

Limitations

Even with improvements in GPT-5.2, AI model accuracy is still limited by several ongoing issues. The training data stops at a cutoff date, leaving gaps in knowledge about recent events. And because the model generates text statistically, it can produce answers that sound confident even when the underlying prediction is uncertain.

Research in orthodontics demonstrates that while ChatGPT performs adequately for general medical information, specialized clinical applications require careful validation.

The model also struggles with mathematical computations, logical reasoning chains, and tasks requiring real-time data verification.

Frequently Asked Questions About ChatGPT’s Accuracy

Q: What causes ChatGPT hallucinations, and how common are they?

ChatGPT hallucinations occur when the model generates confident but factually incorrect information. Current usage statistics indicate these instances affect approximately 15-20% of complex factual queries, though rates vary significantly by topic complexity and prompt specificity.
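Because these rates vary by topic, a spot check on your own questions can be more informative than a headline number. The sketch below estimates an error rate from a small manually reviewed sample and reports a Wilson score interval around it. The sample counts (9 flawed answers out of 50) are hypothetical, and the function name is an illustrative choice.

```python
import math


def wilson_interval(errors: int, total: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for an observed error proportion (z=1.96 is about 95%)."""
    if total <= 0:
        raise ValueError("total must be positive")
    if not 0 <= errors <= total:
        raise ValueError("errors must be between 0 and total")
    p = errors / total
    denom = 1 + z**2 / total
    center = (p + z**2 / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denom
    return max(0.0, center - half), min(1.0, center + half)


# Hypothetical spot check: 9 of 50 reviewed answers contained a fabricated claim.
low, high = wilson_interval(9, 50)
print(f"observed {9 / 50:.0%}, plausible range {low:.0%} to {high:.0%}")
```

With only a few dozen reviewed answers the interval is wide, which is a reminder that small samples cannot pin down a precise rate.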

Q: Can I trust ChatGPT for professional or academic work?

ChatGPT should serve as a starting point rather than a final authority. Always verify critical information through primary sources, especially for medical, legal, or financial decisions.

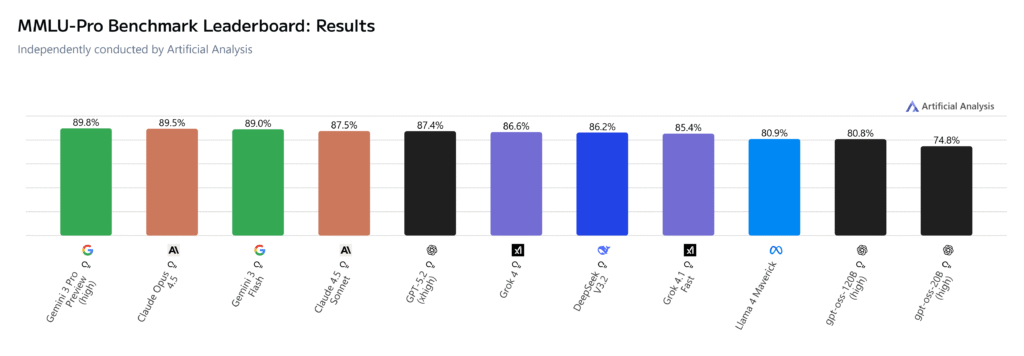

Q: How does ChatGPT compare to other AI models in 2026?

Recent comparative analyses show ChatGPT maintaining competitive accuracy rates, though some specialized models excel in specific domains. The choice depends on your particular use case and required accuracy threshold.

Q: What’s the best way to get more accurate responses?

Provide clear, specific prompts with relevant context. Request sources when possible, and consider breaking complex queries into smaller, focused questions to reduce the likelihood of compounding errors.
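To see how errors can compound, consider a rough model in which an answer depends on several steps and each step is correct independently with the same probability. Both the independence assumption and the 90% per-step figure are illustrative assumptions, not measurements of ChatGPT.

```python
def chain_accuracy(per_step: float, steps: int) -> float:
    """Probability that every step is correct, assuming independent steps."""
    if not 0.0 <= per_step <= 1.0:
        raise ValueError("per_step must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step ** steps


per_step = 0.90  # hypothetical per-step accuracy, for illustration only

for steps in (1, 3, 5, 10):
    print(f"{steps:>2} steps: {chain_accuracy(per_step, steps):.3f}")
```

Under these assumptions, a ten-step answer is fully correct only about 35% of the time, while asking focused questions one at a time lets you catch an error at the step where it occurs.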

Key Takeaways

The MMLU benchmark and specialized testing reveal that accuracy varies dramatically across different use cases, from 85-95% for general knowledge to more variable results in technical fields.

Three essential principles define ChatGPT’s current reliability:

- Verification remains crucial for high-stakes decisions

- Prompt engineering dramatically impacts output quality

- Understanding the model’s training limitations prevents overreliance
